Table of contents

- What You Should Know Before Reading

- A Single Container Isn’t Enough

- The Limits of Manual Operations

- The Origin of Kubernetes

- What Kubernetes Does for You

- Imperative vs Declarative

- Architecture at a Glance

- Pods, Nodes, Clusters

- Kubernetes Doesn’t Solve Everything

What You Should Know Before Reading

Kubernetes is a tool built on top of several technologies. It’s hard to learn completely from scratch, and having a basic sense of the following topics makes things much smoother.

- Containers and Docker: Kubernetes is a container orchestrator. You need to know what containers are and how images work. The Docker Beginner Series covers the full picture

- Linux Basics: Shell commands, filesystems, processes, permissions (UID/GID). Every article involves

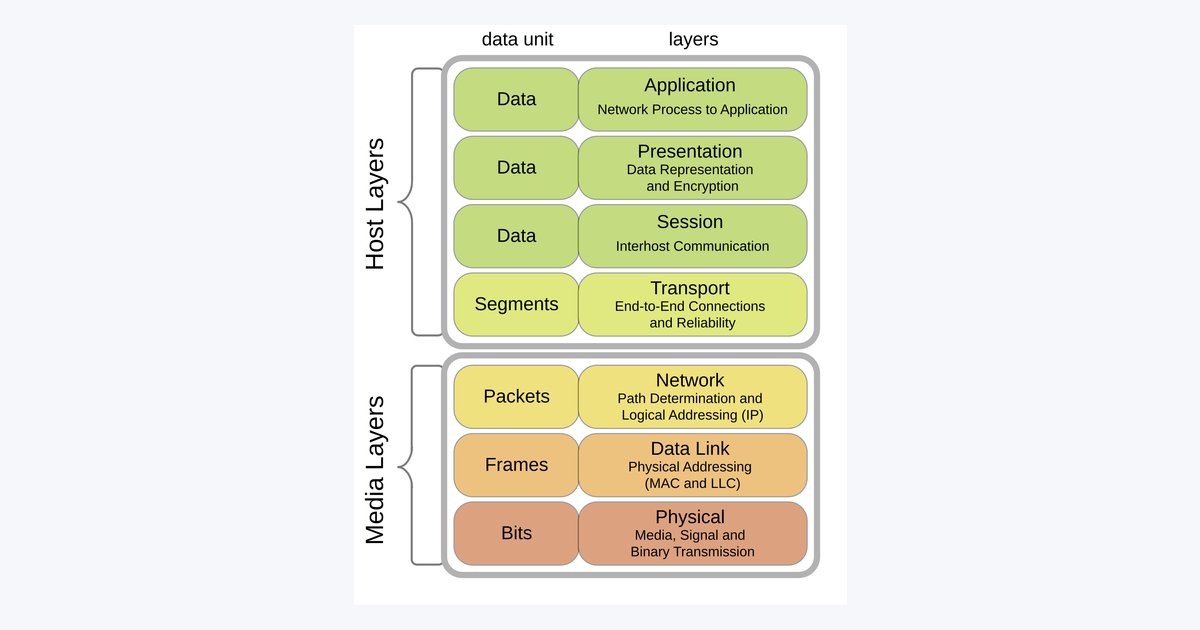

kubectlcommands, and what runs inside containers are ultimately Linux processes - Networking Basics: Concepts like TCP/IP, HTTP, DNS, and ports. Essential for understanding Services and Ingress

A Single Container Isn’t Enough

Think back to the day you first learned Docker. A single docker run nginx command spun up a web server, and the “it works on my machine” problem caused by differences between local and deployment environments vanished completely. In that moment, containers felt like magic.

But the story changes as your service grows. One nginx container, two backend containers, one Redis container, one batch job container. When you have a single server, docker-compose can handle it. But what happens when you have ten servers and one suddenly dies? What if a traffic spike means you need to scale from three backend containers to twenty? Who’s going to do that work?

The moment you answer “a human does it manually,” the operations team’s life becomes miserable. Alerts go off at 2 AM, and SSH-ing into servers to restart containers becomes routine. Containers only solve half the problem. The other half is reliably running multiple containers at scale — the domain of orchestration.

The Limits of Manual Operations

Think back to the days of running servers without containers. The recurring problems looked like this:

- When a server goes down, a human detects it, and a human recovers it

- When traffic spikes, someone provisions new servers, deploys to them, and connects them to the load balancer

- Every deployment means going through the server list and updating them one by one

- Configuration files differ subtly from server to server, turning root cause analysis into archaeology

Containers solved the deployment packaging problem. With a single image, you can run the same thing anywhere. But “automatic recovery when a server dies” and “automatic scaling based on traffic” are not things containers handle on their own. You need a separate system that distributes containers across multiple physical or virtual servers, revives them when they die, replicates them as needed, and distributes traffic.
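To make that gap concrete, here is a minimal Python sketch of the failover step an orchestrator automates. The function name `reassign_after_failure`, the placement dictionary, the server names, and the "fewest containers wins" rule are all assumptions made for illustration; this is not how Kubernetes implements recovery.

```python
def reassign_after_failure(placement, failed_node):
    """Restart a failed node's containers on the least-loaded surviving nodes.

    placement maps node name -> list of container names (hypothetical model).
    Returns a new placement dict; the input is left unchanged.
    """
    survivors = {node: list(containers)
                 for node, containers in placement.items()
                 if node != failed_node}
    if not survivors:
        raise RuntimeError("no healthy nodes left to run containers on")

    for container in placement.get(failed_node, []):
        # Pick the surviving node that currently runs the fewest containers.
        target = min(survivors, key=lambda node: len(survivors[node]))
        survivors[target].append(container)
    return survivors


placement = {
    "server-1": ["nginx", "backend-a"],
    "server-2": ["backend-b"],
    "server-3": ["redis", "batch"],
}
print(reassign_after_failure(placement, "server-3"))
# {'server-1': ['nginx', 'backend-a', 'batch'], 'server-2': ['backend-b', 'redis']}
```

Without an orchestrator, every line of this function is something a person does by hand over SSH.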

The system that fills this role is called a container orchestrator. Tools like Docker Swarm, Nomad, and Mesos exist, but as of 2025, the de facto standard is Kubernetes.

The Origin of Kubernetes

Kubernetes is a project that Google open-sourced in 2014. Google had been using an internal cluster management system called Borg for over a decade, and Kubernetes was essentially a reimagining of it for the outside world. The name comes from the Greek word for “helmsman” or “pilot.” It carries the metaphor of gathering container ships and steering them in one direction.

It is currently managed by the CNCF (Cloud Native Computing Foundation) and has become the central project of the cloud native ecosystem. The major cloud vendors all provide managed Kubernetes services: Amazon EKS, Google GKE, and Azure AKS.

In short: Kubernetes is a platform for running containers at scale, reliably, and in an automated fashion.

What Kubernetes Does for You

Kubernetes takes over the work that operators used to do by hand. Here are some key examples:

- Self-healing: If a container dies, it spins up a new one. If a node goes down, it recreates that node's pods on other healthy nodes

- Horizontal scaling: With an autoscaler configured, it increases replicas when CPU usage rises and reduces them when things calm down

- Load balancing: Distributes traffic across multiple replicas of the same service

- Rolling updates: When deploying a new version, gradually replaces the old version for zero-downtime deployments

- Declarative configuration: Describe “what state should exist” in YAML, and Kubernetes works to maintain that state

- Service discovery: Automatically configures DNS so containers can find each other by name

- Storage management: Mounts persistent volumes so that data outlives individual pods and can be reattached when a pod is rescheduled

The most fundamental item on this list is declarative configuration. All the other features derive from this philosophy.
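Before turning to that idea, the horizontal scaling item can be made concrete. The Kubernetes documentation describes the Horizontal Pod Autoscaler's core calculation as a ratio of the current metric to the target metric, rounded up. The sketch below is a simplified illustration of that ratio: the function name `desired_replicas` and the default bounds are assumptions, and the real autoscaler also applies a tolerance and stabilization behavior that are left out here.

```python
import math


def desired_replicas(current_replicas, current_cpu, target_cpu,
                     min_replicas=1, max_replicas=20):
    """Scale the replica count by the ratio of observed to target CPU usage.

    current_cpu and target_cpu are average utilization percentages.
    The min/max defaults are illustrative, not Kubernetes defaults.
    """
    raw = math.ceil(current_replicas * current_cpu / target_cpu)
    # Clamp the result to the configured bounds.
    return max(min_replicas, min(max_replicas, raw))


print(desired_replicas(3, current_cpu=90, target_cpu=50))  # 6
print(desired_replicas(6, current_cpu=20, target_cpu=50))  # 3
```

Three replicas at 90% CPU against a 50% target scale out to six; once usage drops to 20%, the same rule scales back in to three.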

Imperative vs Declarative

This is usually the least familiar concept when you first touch Kubernetes. In the old days, you issued commands in sequence: “Install nginx on server A, deploy the application to server B.” This is the imperative approach.

Kubernetes works differently. We simply describe “what state should exist,” and Kubernetes figures out how to make it happen. This is called the declarative approach.

    # A declaration that we want to run 3 replicas of nginx
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx
    spec:
      replicas: 3
      selector:
        matchLabels:
          app: nginx
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
            - name: nginx
              image: nginx:1.25

What this YAML says is “keep 3 instances of nginx 1.25 running.” If there are no containers when you first apply it, it creates 3. If 2 already exist, it adds just one more. If one dies, it spins up a new one to maintain 3. Kubernetes does this on its own without being told.

This idea changed the paradigm of infrastructure operations. Instead of worrying about “how to do it,” operators only need to describe “what state should exist.”
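The "compare desired state with actual state, then act on the difference" behavior can be sketched in a few lines of Python. This is a toy model under stated assumptions: the function name `reconcile`, the action strings, and the instance names are hypothetical, and a real Kubernetes controller works against the API server rather than a Python list.

```python
def reconcile(desired_replicas, running):
    """Return the actions needed to get from the observed state to the desired one.

    running is the list of instance names currently observed (hypothetical model).
    """
    diff = desired_replicas - len(running)
    if diff > 0:
        # Too few instances: create exactly as many as are missing.
        return ["create"] * diff
    if diff < 0:
        # Too many instances: delete the surplus from the end of the list.
        return [f"delete {name}" for name in running[diff:]]
    return []  # Observed state already matches the declaration.


print(reconcile(3, []))                                # ['create', 'create', 'create']
print(reconcile(3, ["nginx-a", "nginx-b"]))            # ['create']
print(reconcile(3, ["nginx-a", "nginx-b", "nginx-c"])) # []
print(reconcile(1, ["nginx-a", "nginx-b", "nginx-c"])) # ['delete nginx-b', 'delete nginx-c']
```

The caller never says "start one more container." It only states the target of 3, and the same function yields the right action whatever the starting point.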

Architecture at a Glance

A Kubernetes cluster is divided into two major parts: the Control Plane and the Worker Nodes.

    flowchart TB
        subgraph CP[Control Plane]
            API[API Server]
            ETCD[(etcd)]
            SCH[Scheduler]
            CM[Controller Manager]
        end
        subgraph W1[Worker Node 1]
            K1[kubelet]
            KP1[kube-proxy]
            P1[Pods]
        end
        subgraph W2[Worker Node 2]
            K2[kubelet]
            KP2[kube-proxy]
            P2[Pods]
        end
        USER[User / kubectl] -->|API Request| API
        API <--> ETCD
        SCH --> API
        CM --> API
        API <--> K1
        API <--> K2

The Control Plane is the brain of the cluster. It decides which containers to run where, stores the cluster state, and processes user requests. Worker Nodes are the servers where containers actually run. When the Control Plane says “I’ve assigned this container to you,” the kubelet on the Worker Node executes that instruction.

Users interact with the API Server via the kubectl command, requesting “make it look like this.” The API Server stores that request in etcd, and each component reads the state and does what’s needed. The Scheduler decides which Worker to place new pods on, and the Controller Manager acts to reconcile things when “the current state differs from the declared state.”
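As a rough illustration of the Scheduler's placement decision, the Python sketch below first filters out nodes that cannot fit a pod and then picks the one with the most free CPU. The `Node` class, the `schedule` function, and the single-resource scoring rule are assumptions chosen for brevity; the real scheduler weighs many more factors than CPU.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    free_cpu_millicores: int


def schedule(pod_cpu_request, nodes):
    """Pick a node for a pod: keep the nodes that fit, then prefer the most free CPU."""
    feasible = [n for n in nodes if n.free_cpu_millicores >= pod_cpu_request]
    if not feasible:
        return None  # No node fits, so the pod stays unscheduled for now.
    best = max(feasible, key=lambda n: n.free_cpu_millicores)
    # Reserve the requested CPU on the chosen node.
    best.free_cpu_millicores -= pod_cpu_request
    return best.name


nodes = [Node("worker-1", 500), Node("worker-2", 1500)]
print(schedule(1000, nodes))  # worker-2
print(schedule(400, nodes))   # worker-1
print(schedule(600, nodes))   # None
```

The third pod finds no node with 600 millicores free, which mirrors what happens in a real cluster when a pod waits for capacity.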

If you have a rough picture of this structure in your head, that’s enough. The specific behavior of each component is covered in detail in Part 2.

Pods, Nodes, Clusters

There are three words you need to learn first when studying Kubernetes:

- Pod: The smallest deployable unit that wraps containers. Usually one container per pod, but tightly coupled containers can be grouped together

- Node: A physical or virtual server where pods run. Divided into Worker Nodes and Control Plane Nodes

- Cluster: Multiple nodes grouped together to form a single logical system

To use an analogy: a cluster is an apartment complex, a node is each building, and a pod is each unit within a building. Even though units are scattered across different buildings, they can find each other within the same complex.

Why is a pod one level above a container? It’s for management convenience. Two containers that work closely together (e.g., a main app + a log collector) need to share the same network space and storage, and Kubernetes handles this by grouping them into a pod. We’ll explore this further in Part 3.
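The containment relationship between the three terms can be modeled with a few Python dataclasses. This is only a mental model: the class fields, the `find_pod` helper, and the IP addresses are hypothetical and do not correspond to Kubernetes API objects.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    name: str
    ip: str            # One address shared by every container in the pod.
    containers: list


@dataclass
class Node:
    name: str
    pods: list = field(default_factory=list)


@dataclass
class Cluster:
    nodes: list = field(default_factory=list)

    def find_pod(self, pod_name):
        """Look a pod up by name across all nodes; return (node name, pod) or None."""
        for node in self.nodes:
            for pod in node.pods:
                if pod.name == pod_name:
                    return node.name, pod
        return None


web = Pod("web", "10.0.1.5", ["app", "log-collector"])
cache = Pod("cache", "10.0.2.7", ["redis"])
cluster = Cluster([Node("worker-1", [web]), Node("worker-2", [cache])])

node_name, pod = cluster.find_pod("cache")
print(node_name, pod.ip, pod.containers)  # worker-2 10.0.2.7 ['redis']
```

The `web` pod holds two containers behind a single address, which is the "main app + log collector" case, while the lookup spans nodes the way units find each other across buildings in the same complex.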

Kubernetes Doesn’t Solve Everything

One last thing to address. Kubernetes is powerful, but it’s not a silver bullet.

- The learning curve is steep. This is not a tool you get comfortable with in a few days

- Using Kubernetes for small projects can be over-engineering

- There’s a cost to operating the cluster itself. Someone has to manage the Control Plane too

- Kubernetes won’t fix bugs or design flaws in your application code

Still, when services grow, multiple teams collaborate, and reliability becomes critical, the value of Kubernetes becomes clear. It’s complex at first, but once you get comfortable with it, it’s hard to go back to other approaches.

In the next part, we’ll dissect the inside of the cluster. We’ll look at how the Control Plane components connect and exactly what kubelet does on Worker Nodes.
