Table of contents

- The Graveyard of “It Works on My Machine”

- VMs and Containers Are Different

- The Reality of a Container Is a Linux Process

- Containers Existed Before Docker

- Docker’s Overall Architecture

- Images and Containers — Similar but Different

- Running Your First Container

- What Docker Cannot Solve

- The Road Ahead in This Series

The Graveyard of “It Works on My Machine”

When a developer joins a new team, there is a ritual they almost always go through. Open the README, match the Java version, install PostgreSQL, grab Redis, set up environment variables. Then an error pops up somewhere. A colleague walks by and says, “Oh, that’s because your libssl version is different. I had the same issue.” And just like that, the entire morning is gone.

At larger scale, things get even worse. An app that runs perfectly on a developer’s machine dies the moment it hits the QA server. Digging into the root cause reveals OS version mismatches, minor bugs in system libraries, or case-sensitive file paths. None of it is the code’s fault, yet the code breaks. “It works on my machine” is not a joke — it is a symptom of a structural problem.

Docker attacks this problem head-on. The idea is to bundle everything needed to run an app (code, runtime, system libraries, configuration) into a single package so that it runs the same way everywhere. The package is called an image, a running instance of it is called a container, and Docker is both the company and the tool that built this ecosystem.

VMs and Containers Are Different

One of the easiest things to confuse when first learning Docker is the difference between virtual machines (VMs) and containers. Both seem to provide “isolated environments,” but internally they are entirely different.

flowchart LR

subgraph VM["Virtual Machine (VM)"]

HW1["Hardware"] --> HOS1["Host OS"]

HOS1 --> HYP["Hypervisor"]

HYP --> GOS1["Guest OS 1"]

HYP --> GOS2["Guest OS 2"]

GOS1 --> APP1["App A"]

GOS2 --> APP2["App B"]

end

subgraph CT["Container"]

HW2["Hardware"] --> HOS2["Host OS (shared kernel)"]

HOS2 --> DK["Container Runtime"]

DK --> C1["Container A"]

DK --> C2["Container B"]

C1 --> APPC1["App A"]

C2 --> APPC2["App B"]

end

A VM runs a complete, independent OS on top of a hypervisor. Each VM has its own kernel. This makes isolation very strong, but booting takes tens of seconds and each VM consumes memory in the gigabyte range. Spinning up a single VM is similar to buying a small computer.

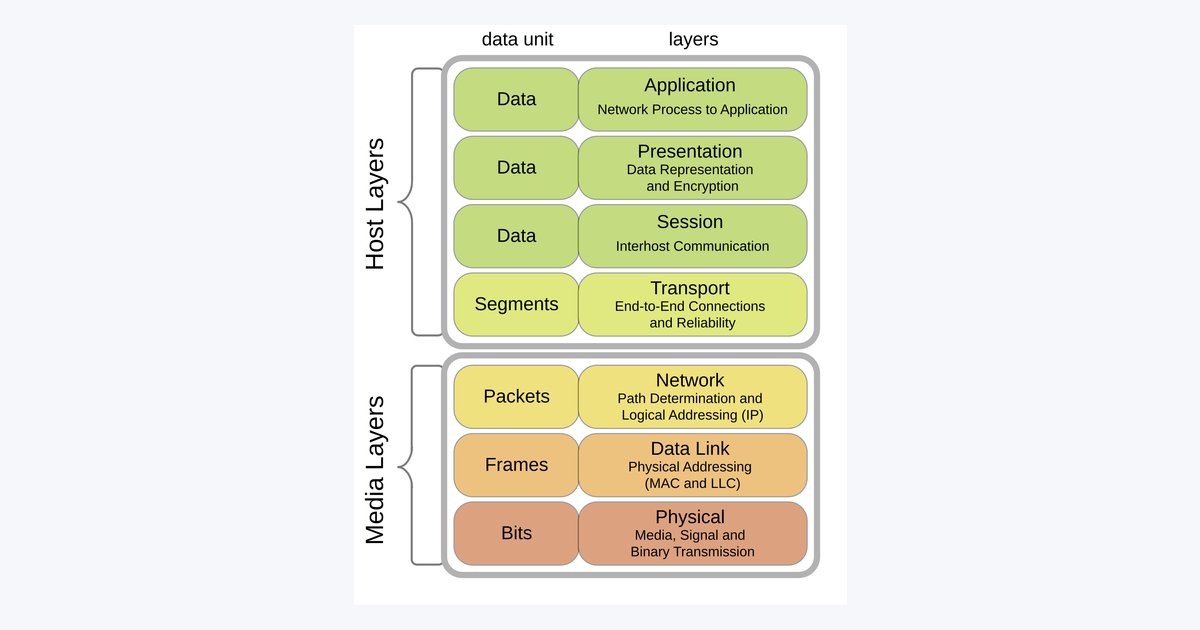

Containers are different. They share the host OS kernel and add only process-level isolation on top of it. Because no separate kernel has to boot, startup is nearly instant, and because images do not carry a kernel, they are lightweight. Starting an nginx container from a locally cached image typically takes about a second or less.

The difference can be summed up in one line: VMs virtualize the machine; containers virtualize the process.
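One way to observe the shared kernel is to compare the kernel release on the host with the one reported inside a container. The following is a minimal sketch, assuming Docker Engine runs natively on a Linux host and that the public alpine image is acceptable for a throwaway test. Neither assumption comes from the text above, and compare_kernels is a hypothetical helper name.

```shell
# Compare the kernel release seen by the host with the one seen inside a container.
# Assumes a reachable Docker daemon and the public "alpine" image (illustrative choice).
compare_kernels() {
  if ! docker version >/dev/null 2>&1; then
    echo "Docker is not available; skipping"
    return 0
  fi
  local host_kernel container_kernel
  host_kernel="$(uname -r)"
  container_kernel="$(docker run --rm alpine uname -r)"
  echo "host:      $host_kernel"
  echo "container: $container_kernel"
  if [ "$host_kernel" = "$container_kernel" ]; then
    echo "same kernel: the container is a process on this host's kernel"
  else
    echo "different kernel: Docker is probably running inside a VM"
  fi
}

compare_kernels
```

On Docker Desktop the second branch is the expected one, because the container reports the kernel of the Linux VM rather than that of Windows or macOS.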

The Reality of a Container Is a Linux Process

Containers might sound magical, but from the Linux perspective, they are just somewhat special processes. Docker did not invent a new technology — it combines two features that the Linux kernel has provided for a long time.

namespace — Making the World Look Separate

This is the technology that makes a process “think it is alone.” The Linux kernel provides several types of namespaces:

- PID namespace: Isolates the process ID space. PID 1 inside a container might be PID 12345 on the host

- Network namespace: Isolates network interfaces. Each container gets its own eth0

- Mount namespace: Isolates filesystem mount points. A container sees its own /

- UTS namespace: Isolates the hostname. A container can have its own hostname

- IPC namespace: Isolates inter-process communication (semaphores, shared memory)

- User namespace: Isolates UID/GID mapping. Root inside the container can be a regular user on the host

You can create a namespace directly using the unshare command. The following command runs bash inside a new PID namespace:

sudo unshare --fork --pid --mount-proc bash

# It looks like you've entered a container

ps aux

# PID USER TIME COMMAND

# 1 root 0:00 bash

# 5 root 0:00 ps aux

Hundreds of processes are running on the host, but running ps in this shell shows only two. That is what a namespace does.
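To see which namespaces a process belongs to without creating new ones, the kernel exposes them as symbolic links under /proc/<pid>/ns. The sketch below prints them for the current shell; show_namespaces is a name made up for illustration. Two processes in the same namespace show the same number, so running it on the host and again inside the unshare shell should print different values for pid and mnt.

```shell
# Print the namespace identifiers of a process (default: the current shell).
show_namespaces() {
  local pid="${1:-$$}" ns target
  for ns in pid net mnt uts ipc user; do
    # Each entry is a symlink such as "pid:[4026531836]"; the number identifies the namespace.
    target="$(readlink "/proc/$pid/ns/$ns" 2>/dev/null || echo "unavailable")"
    printf '%-5s %s\n' "$ns" "$target"
  done
}

show_namespaces
```

Passing a PID as the first argument inspects another process instead, which usually requires root when that process belongs to a different user.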

cgroups — Confining Resources

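If namespaces limit “visibility,” cgroups (control groups) limit “how much can be consumed.” They constrain CPU, memory, disk I/O, and the number of processes on a per-process-group basis. Network bandwidth is not capped by cgroups directly; traffic is classified per cgroup and shaped with separate tools such as tc.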

# Example of limiting memory to 512MB in a cgroup v2 environment

sudo mkdir /sys/fs/cgroup/mygroup

echo "536870912" | sudo tee /sys/fs/cgroup/mygroup/memory.max

echo $$ | sudo tee /sys/fs/cgroup/mygroup/cgroup.procs

What this does is straightforward: create a cgroup under /sys/fs/cgroup/mygroup, set a 512MB memory ceiling, and place the current shell process into that group. From now on, if this shell and its child processes together exceed 512MB and the kernel cannot reclaim enough memory, the OOM killer terminates a process in the group.
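The value 536870912 is simply 512 × 1024 × 1024 bytes. As a small sketch, the first helper below does that conversion and the second reads back which cgroup the current shell sits in and what its ceiling is. Both helper names are hypothetical, and the second assumes cgroup v2 mounted at /sys/fs/cgroup, as in the example above.

```shell
# Convert MiB to the byte count that memory.max expects.
mib_to_bytes() {
  echo $(( $1 * 1024 * 1024 ))
}

# Show which cgroup the current shell belongs to and its memory ceiling, if readable.
# Assumes cgroup v2 mounted at /sys/fs/cgroup.
show_memory_limit() {
  local rel
  # On cgroup v2, /proc/<pid>/cgroup holds a single line of the form "0::/path".
  rel="$(sed -n 's/^0:://p' "/proc/$$/cgroup" 2>/dev/null)"
  echo "cgroup: ${rel:-unknown}"
  if [ -n "$rel" ] && [ -r "/sys/fs/cgroup$rel/memory.max" ]; then
    echo "memory.max: $(cat "/sys/fs/cgroup$rel/memory.max")"
  else
    echo "memory.max: not readable here"
  fi
}

mib_to_bytes 512   # 536870912
show_memory_limit
```

After the shell has been placed into mygroup, the second call should report /mygroup and the byte value written earlier. The root cgroup has no memory.max file, which is why the fallback message exists.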

What Docker does, in the end, is run a process that is isolated with namespaces and resource-constrained with cgroups. It is not magic — it is a combination of Linux kernel features.

Containers Existed Before Docker

The container concept was not invented by Docker. Technologies like FreeBSD jail in 2000, Solaris Zones in 2005, and LXC (Linux Containers) in 2008 already existed. Google had been building cgroups since 2006 and using containers in its internal systems.

However, these technologies were too complex. Setting up a single LXC instance required dozens of lines of configuration and a fairly deep understanding of Linux internals. They were not tools an average developer could easily use.

Docker launched in 2013 as a tool that wrapped LXC and made it easy to use via CLI. A single command like docker run ubuntu accomplished what used to take half a day. Docker later built its own runtime (libcontainer, later runc) to eliminate the LXC dependency, defined an image format, and created a registry to make image sharing easy. It is no exaggeration to say that the popularization of container technology is divided into before Docker and after Docker.

Docker’s Overall Architecture

When you install Docker and type docker run hello-world, quite a lot happens behind the scenes. Let’s look at the big picture first.

flowchart TB

USER["Developer / CI"] -->|docker CLI| CLI[docker CLI]

CLI -->|REST API via socket| DAEMON[Docker Daemon<br/>dockerd]

subgraph HOST["Docker Host"]

DAEMON --> CONTAINERD[containerd]

CONTAINERD --> RUNC[runc]

RUNC --> C1["Container A<br/>(namespace + cgroups)"]

RUNC --> C2["Container B"]

DAEMON --> IMG[("Local Image Store")]

DAEMON --> NET[("Network / Volumes")]

end

DAEMON -->|pull/push| REG[("Docker Registry<br/>Docker Hub, ECR, etc.")]

Let’s go through the components one by one.

- Docker CLI: The docker command you type in the terminal. It is essentially a REST client. It converts received commands into API requests and sends them to the Docker Daemon

- Docker Daemon (dockerd): A process that is always running on the host. It manages images, containers, volumes, and networks. By default, it receives requests through the /var/run/docker.sock Unix socket

- containerd: The runtime responsible for container execution and lifecycle management. Originally an internal Docker component, it was donated to the CNCF and became an independent project

- runc: The lowest-level runtime that actually starts container processes. It implements the OCI (Open Container Initiative) standard. It is the “real workhorse” that sets up namespaces and cgroups

- Docker Registry: An image storage service. Docker Hub is the most well-known, and there are many implementations such as AWS ECR, GCR, GitHub Container Registry, and self-hosted Harbor

Although this architecture might look complex at first glance, the core idea is simple: the CLI sends requests, the Daemon receives them, and containerd/runc actually start the containers.
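Because the CLI is only a REST client, the same requests can be sent by hand. The sketch below uses curl against the daemon's Unix socket. It assumes curl is installed and that the current user may read the socket; docker_api_get and the DOCKER_SOCK override are names invented for this illustration, not part of Docker.

```shell
# Send a GET request to the Docker Engine API over the daemon's Unix socket.
# DOCKER_SOCK is an illustrative override variable, not something Docker itself reads.
docker_api_get() {
  local path="$1"
  local sock="${DOCKER_SOCK:-/var/run/docker.sock}"
  if [ ! -S "$sock" ]; then
    echo "no Docker socket at $sock" >&2
    return 1
  fi
  # The host part of the URL is ignored for Unix sockets; "localhost" is a placeholder.
  curl --silent --unix-socket "$sock" "http://localhost$path"
}

# Roughly what "docker version" and "docker ps" ask the daemon for:
docker_api_get /version || echo "daemon not reachable"
docker_api_get /containers/json || echo "daemon not reachable"
```

The responses are JSON, which the CLI formats into the tables shown in the terminal.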

Images and Containers — Similar but Different

The most confusing terms when learning Docker are image and container.

- Image: A read-only template containing everything needed for execution. Think of it as a class

- Container: An actual running instance based on an image. Think of it as an object

You can spin up 100 containers from the same image, and each container has an independent filesystem and network.

# Pull an image

docker pull nginx:1.27

# Start containers from the image — multiple from the same image is fine

docker run -d --name web1 -p 8080:80 nginx:1.27

docker run -d --name web2 -p 8081:80 nginx:1.27

# Check running containers

docker ps

docker pull downloads an image from a registry, and docker run starts a container process based on that image. Keeping the relationship between these two commands in mind makes all subsequent commands much easier to follow.
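The independence of containers started from one image can be checked directly. This is a minimal sketch, assuming the web1 and web2 containers from the commands above are still running. The helper name check_isolation and the /tmp/marker path are arbitrary choices for the demonstration.

```shell
# Write a file in web1 and check whether web2 can see it.
# Assumes the web1 and web2 containers are running.
check_isolation() {
  if ! docker version >/dev/null 2>&1; then
    echo "Docker is not available; skipping"
    return 0
  fi
  docker exec web1 sh -c 'echo from-web1 > /tmp/marker'
  if docker exec web2 cat /tmp/marker >/dev/null 2>&1; then
    echo "web2 sees the file (unexpected)"
  else
    echo "web2 does not see the file: each container has its own writable layer"
  fi
  # Clean up the marker so the demonstration leaves no trace in web1.
  docker exec web1 rm /tmp/marker
}

check_isolation
```

The image itself is never modified. Each container only writes to its own layer on top of the shared read-only template.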

Running Your First Container

Reading explanations alone gets tedious, so let’s actually run a container. Assume you have Docker Desktop or Docker Engine installed.

docker run hello-world

Here’s what this single line does internally:

- Looks for an image called hello-world locally

- If not found, pulls it from Docker Hub

- Creates a container based on the image

- Runs the program defined in the container (in this case, prints a welcome message)

- When the program finishes, the container also terminates
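The steps above can be approximated with the individual commands that docker run combines. This is a sketch rather than a description of Docker's internal code path, and run_step_by_step is a hypothetical helper name.

```shell
# Approximate "docker run <image>" with the separate commands it combines.
run_step_by_step() {
  local image="$1" id
  if ! docker version >/dev/null 2>&1; then
    echo "Docker is not available; skipping"
    return 0
  fi
  # Look for the image locally; pull it only if it is missing.
  docker image inspect "$image" >/dev/null 2>&1 || docker pull "$image"
  # Create a container from the image without starting it; the command prints its ID.
  id="$(docker create "$image")"
  # Start it attached, so the call returns when the program inside exits.
  docker start --attach "$id"
  echo "container $id has exited"
}

run_step_by_step hello-world
```

Splitting the command this way makes it visible that creating a container and starting its process are separate operations.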

The output looks something like this:

Hello from Docker!

This message shows that your installation appears to be working correctly.

...

The moment you see this message, Docker is running on your machine. You can check the record of the container you just ran with docker ps -a.

docker ps -a

# CONTAINER ID IMAGE COMMAND CREATED STATUS

# 3f2b9e... hello-world "/hello" 10 seconds ago Exited (0)

A container remains in the Exited state after its program finishes. It stays on disk until explicitly removed. This lifecycle will be explored in depth in Part 4.
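As a preview, exited containers can be removed explicitly. The sketch below removes only those created from one image; remove_exited is a hypothetical helper name, and it deletes containers for real, so try it on throwaway ones such as hello-world.

```shell
# Remove exited containers that were created from a given image.
remove_exited() {
  local image="$1" ids
  if ! docker version >/dev/null 2>&1; then
    echo "Docker is not available; skipping"
    return 0
  fi
  # -q prints only IDs; the filters keep exited containers created from this image.
  ids="$(docker ps -aq --filter "ancestor=$image" --filter "status=exited")"
  if [ -z "$ids" ]; then
    echo "nothing to remove"
    return 0
  fi
  # $ids is intentionally unquoted so that each ID becomes a separate argument.
  docker rm $ids
}

remove_exited hello-world
```

To avoid leftovers in the first place, docker run --rm hello-world removes the container automatically as soon as it exits.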

What Docker Cannot Solve

It is easy to assume Docker is a silver bullet, but it has clear limitations.

- The host kernel is shared: You cannot run Windows containers directly on a Linux host (and vice versa). Docker Desktop works on Windows/macOS because it runs Linux containers inside a lightweight Linux VM

- Security isolation is not as strong as VMs: Because the kernel is shared, a kernel vulnerability can theoretically enable container escape. In production, supplementary isolation technologies like gVisor and Kata Containers are sometimes used

- Application state is not handled by the container: Persistent data like database records or uploaded files must be managed separately with volumes (covered in Part 5)

- Docker alone is not enough for orchestration across multiple servers: Docker Swarm exists, but Kubernetes is the de facto standard in practice

Learning Docker is also the process of understanding these limitations — knowing where Docker’s territory ends and where other tools are needed.

The Road Ahead in This Series

In this article, we only covered “why Docker” and “what Docker looks like.” Over the next several parts, we will dig into the following topics in order:

- Part 2 — Images and Layers: How images are stacked, how caching works, and how to reduce image size

- Part 3 — Dockerfile: The syntax and philosophy of building images yourself

- Part 4 — Container Lifecycle: Everything about running, stopping, restarting, and termination signals

- Part 5 — Volumes and Data: Data that survives even when containers die

- Part 6 — Networking: Communication between containers, and between containers and the outside world

Each part will be filled with content you can use in real-world practice right away.

In the next part, we dissect the internals of Docker images: why images are built in “layers,” why pulling the same image a second time finishes almost instantly, and what the basic techniques for reducing image size are.
